The post Walking with Dinosaurs appeared first on synvert Data Insights.

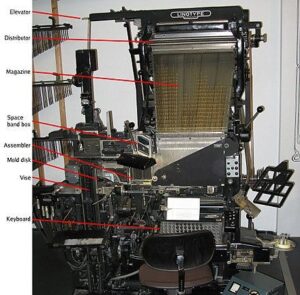

]]>That journey really starts with my Father, his job at the time was maintaining linotype and intertype machines for the local newspaper.

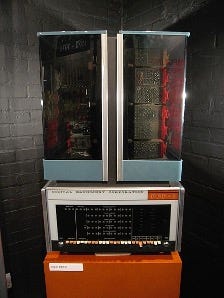

As you will see from the link these are mechanical machines using hot metal to produce typeset blocks that can be used in a printing press. As technology advanced, so did my Father´s role and he eventually became head of the digital systems department when all the mechanical systems were replaced by computers. This led to my introduction to my first computer – a PDP-8 in 1978.

This computer had no screen, no keyboard and no mouse (a mouse controlling a computer was first demonstrated a decade before in 1968 but they still weren´t generally available), instead, just a series of switches and lights on the front panel. It also made use of magnetic core memory, and it was my first introduction to binary.

In 1980 (I was 14) Sir Clive Sinclair released the ZX80

and although my Father ordered one as a kit to build himself, it never arrived so instead he ordered a microtan-65.

This computer plugged into a standard TV and came with a hexadecimal keypad – the full keyboard didn´t appear for another year or so. This was my introduction to 6502 microprocessors, BASIC and machine code.

By 1982, my school friends all seemed to be in the ZX80/81 and spectrum camp, I was a little out of step on “cool” games to play as we moved from the microtan into another family of 6502 processors, namely the Commodore Vic-20 and then the Commodore 64.

This was the era of loading games from cassette tape. The spectrum made use of a standard tape player whereas the Vic-20 required a specific and thus more expensive dedicated tape player. The disadvantage of the spectrum was that the loading was very susceptible to the volume – too loud or too quite and the game wouldn´t load; the commodore didn´t suffer from this issue. The next innovation for our Commodore 64 was the addition of a Commodore 1541 5.25 floppy drive. This had a capacity of 170 kilobytes.

This year was also my first tentative steps into writing computer games. One of my friends had a ZX81, with a graphics expansion pack (quite an expensive item at the time) and additional 4kB memory. As we had both just watched the Clint Eastwood movie Firefox, we decided to write a game based on this movie. The game like the movie came in two sections – getting to the aircraft and then stealing it and flying to a friendly zone. We did this as a text based adventure that led to a flight simulator, we even had rear view cameras displaying the enemy jets. It sounds great, but in reality I´m not cut out for writing adventure games and the graphics expansion pack required to play the game cost a considerable amount so we didn´t gain any interest in selling it – probably for the best.

At this time my school had an agreement with the local University, they had a Nord ND500, and we could send them hand written programs written in ForTran 4 on preformatted forms, they would type the code in and a week or so later, the output along withe the punch cards would be returned. I very quickly learned to double check my spelling and coding before sending the forms in the post – lest after a week of waiting the returned output said “syntax error line 7”. Such events led to an appreciation of quality control and testing. The following year we gained a direct teletype link to the university computer so I was able to type my code directly after school. My school also had a Research Machines 380Z

but I didn´t like it as I´d grown up on 6502 processors, and the 380Z was a Z80 processor so the instruction set was different.

At home, following the Comodore 64, there was really only one option available for the next system, a Commodore Amiga 500 followed by an Amiga 2000.

These both used a 68030 processor. I was able to expand the 2000 to include a bridgeboard for an Intel x86 and this was my first introduction to MS-DOS.

Now into 1984 when I was 18, and my next computer system, a Commodore Pet.

This particular model came with the very useful Commodore 4040 twin 5.25 inch floppy drives. Although the small built-in green screen took a little getting used to, I used this system to write and sell my first commercial software, written entirely in BASIC. Back in 1982, the UK national newspaper Daily Mail launched a bingo game played through the newspaper with a large cash prize, but due to an issue in the production of the numbers, thousands of people ended up winning a share of the prize, with each person getting around £2 each (BBC Archive).

In 1984, the local newspaper (The Hull Daily Mail) had decided to try the idea again so I was tasked with writing the code to produce the cards and run the games. The dual floppy drive came in very handy as I could run the program on one disk and maintain the game database on the other. Now this is 1984 and there weren´t any database systems available — at least not one that would run in a 170kB 5.25 floppy on a Commodore Pet, so I wrote my own file based database. The game was played in testing for four months before being approved for publication, sadly at this time the Gaming Commission refused permission to run the game so eventually it was shelved unused.

In 1989 I was 23 and I joined BP Chemicals Hull as a freelancer and eventually joined a project called Utilities Control System (UCS). This project was to computerise the entire chemicals site and so modernise how the engineers and operators managed and ran the site. This was my first introduction to Digital VAX and the OpenVMS operating system. All the code I wrote here was in ForTran 77 although we did have a short project running in Prolog. In 1996, I went back to freelancing and stayed with OpenVMS running on VAX and Alpha systems until 2009.

In 2009 I was working at NYSE Euronext in London, still using Alpha and OpenVMS and coding in Pascal. Management made a decision in 2009 to migrate from the 30 year old Pascal system to a modern, extensible, high performance system and Ab Initio was chosen. I transferred over to that team and the rest is as they say, history, I have now been working with Ab Initio for over 16 years. At this point I have run out of old computer systems, as Ab Initio will pretty much run on anything, cloud or on-premise.

NYSE Euronext closed down at the end of 2014 and still freelance I moved first to Edinburgh, then to Brussels. Finally in 2021, I was offered a permanent role with synvert Data Insights, a decision I´m still very happy I took. I now live in Munich as a permanent resident, my German language level isn´t great but I´m working on it and I´m looking forward to the coming years helping synvert Data Insights clients get the most benefit from their data.

The post Walking with Dinosaurs appeared first on synvert Data Insights.

]]>The post Überblick über die aktuell verfügbaren Tabellentypen in Snowflake appeared first on synvert Data Insights.

]]>Snowflake bietet eine Vielzahl von Tabellentypen, die für unterschiedliche Anwendungsfälle und Anforderungen optimiert sind. Hier ist eine Übersicht über die wichtigsten Tabellentypen inklusive Beispielen:

-

Permanente Tabellen (Permanent Tables)

Beschreibung: Diese Tabellen speichern Daten dauerhaft und sind für langfristige Speicherung und Abfragen konzipiert.

Anwendungsfälle: Geeignet für Produktionsdaten, die regelmäßig abgefragt und analysiert werden.

Beispiel:

CREATE TABLE my_permanent_table (

id NUMBER,

name STRING,

created_at TIMESTAMP

);

-

Temporäre Tabellen (Temporary Tables)

Beschreibung: Temporäre Tabellen speichern Daten nur für die Dauer einer Benutzersitzung. Nach dem Ende der Sitzung werden die Daten automatisch gelöscht.

Anwendungsfälle: Ideal für Zwischenspeicherung von Daten während ETL-Prozessen oder für sitzungsspezifische Berechnungen

Beispiel:

CREATE TEMPORARY TABLE my_temp_table (

id NUMBER,

session_data STRING

);

-

Transiente Tabellen (Transient Tables)

Beschreibung: Diese Tabellen bieten eine kostengünstige Lösung für die Speicherung von Daten, die nicht dauerhaft benötigt werden. Sie unterstützen keine Time Travel-Funktionalität.

Anwendungsfälle: Nützlich für temporäre Daten, die länger als eine Sitzung benötigt werden, aber keine langfristige Speicherung erfordern

Beispiel:

CREATE TRANSIENT TABLE my_transient_table (

id NUMBER,

temp_data STRING

);

-

Externe Tabellen (External Tables)

Beschreibung: Externe Tabellen ermöglichen den Zugriff auf Daten, die außerhalb von Snowflake in externen Speichersystemen (z.B. AWS S3, Google Cloud Storage) gespeichert sind.

Anwendungsfälle: Perfekt für die Integration und Analyse von Daten, die in externen Quellen gespeichert sind, ohne sie in Snowflake zu replizieren

Beispiel:

CREATE EXTERNAL TABLE my_external_table (

id NUMBER,

data STRING

)

WITH LOCATION = @my_stage

FILE_FORMAT = (TYPE = ‘CSV’);

-

Hybridtabellen (Hybrid Tables)

Beschreibung: Diese Tabellen bieten optimierte Leistung für sowohl Lese- als auch Schreiboperationen und sind für transaktionale und hybride Workloads ausgelegt.

Anwendungsfälle: Geeignet für Anwendungen, die sowohl OLTP- als auch OLAP-Workloads erfordern

Beispiel:

CREATE OR REPLACE HYBRID TABLE my_hybrid_table (

id NUMBER PRIMARY KEY,

name STRING NOT NULL,

value STRING

);

- Iceberg-Tabellen (Iceberg Tables)

Beschreibung: Iceberg-Tabellen verwenden das offene Tabellenformat Apache Iceberg und bieten Funktionen wie ACID-Transaktionen und Schema-Evolution.

Anwendungsfälle: Ideal für die Verwaltung großer Datenmengen in einem externen Cloudspeicher mit erweiterten Datenverwaltungsfunktionen

Beispiel:

CREATE OR REPLACE ICEBERG TABLE my_iceberg_table (

id NUMBER,

data STRING

)

WITH LOCATION = ‘s3://my-bucket/my-path/’

FILE_FORMAT = (TYPE = ‘PARQUET’);

Fazit

Die Wahl des richtigen Tabellentyps in Snowflake hängt stark von den spezifischen Anforderungen und Anwendungsfällen ab. Permanente Tabellen sind ideal für langfristige Speicherung, während temporäre und transiente Tabellen für kurzlebige Daten nützlich sind. Externe Tabellen ermöglichen die Integration externer Datenquellen, und Iceberg-Tabellen bieten erweiterte Funktionen für die Verwaltung großer Datenmengen.

The post Überblick über die aktuell verfügbaren Tabellentypen in Snowflake appeared first on synvert Data Insights.

]]>The post Data Transfer AWS – SAP HANA appeared first on synvert Data Insights.

]]>Due to the strengths of these two platforms, it is natural for companies to seek the best of both worlds and establish a connection between them. Often, data stored in SAP needs to be transferred to AWS for back-ups, machine learning or some other advance analytics capabilities. Conversely, the information processed in AWS is frequently needed back in SAP system.

In this post we will explain different approaches to transfer data between these two platforms.

Using AWS Environment

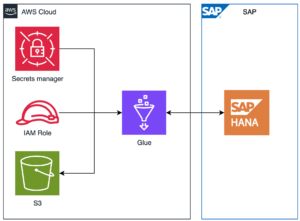

Connect AWS and SAP HANA using Glue

This entrance will show how to create a connection to SAP HANA tables using AWS Glue, how to read data from HANA tables and store the extracted data in S3 bucket in CSV format and of course how to read a CSV file stored in S3 and write the data in SAP HANA.

Pre-requisites:

-

HANA Table created

-

S3 Bucket

-

Data in CSV format (only to import data to SAP HANA)

-

SAP Hana JDBC driver

-

Components Creation

Secrets Manager

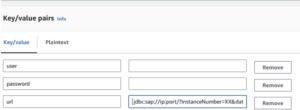

Let’s start with storing the HANA data for the connection in AWS Secrets Manager

-

user

-

password

-

url

*All this information should be provided by HANA system

Go to Secrets in the AWS console and create a new Secret, select Other type of secret and add the keys with the corresponding value.

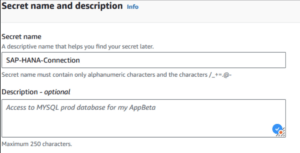

Go to next page and make sure to give this Secret a descriptive name, it is a good practice to also add a description, but this is totally optional.

IAM Role & policy

It is needed a role with access to the S3 bucket, Glue components and Secrets Manager data for the connection. AWS roles work with policies that are created separately and attached after. It is recommendable the creation in this order so we can add the minimum permissions needed for this process to work.

Policy creation

Under policy, click on create policy and select Secrets Manager, allow the following access:

-

GetSecretValue

Under Resources, make sure to add the ARN of the Secrets we created previously.

Add more permissions at the bottom and select S3, allow the following access:

-

ListBucket

-

GetObject

-

PutObject

Under Resources, make sure to add the ARN of the S3 Bucket where the data and driver connector are stored.

Add one more access for Glue and allow the following access:

-

GetJob

-

GetJobs

-

GetJobRun

-

GetJobRuns

-

StartJobRun

-

GetConnection

-

GetConnections

Under Resources, make sure to add the ARN.

In order to monitor our Job, we must add the permission for logs. Add CloudWatch Logs with the following access:

-

DescribeLogGroups

-

CreateLogGroup

-

CreateLogStream

-

PutLogEvents

Optional: You can use the predefined policy AWSGlueServiceRole instead, with this, the access to Glue and CloudWatch be allowed.

Role

To create the IAM Role, go to IAM in the AWS console and select Roles on the left panel, click on Create Role and select the AWS Service option.

Then, in Use Case section, select Glue, since this role will be mainly used for Glue.

The next step is to add permissions to our Role, for this it is recommended to use specific policies that includes the minimum accesses that our Role need, you can select the policy if you have it already created, otherwise you can just click on next and attach it once you created it.

In the next step we will be asked for the Name of this Role, here it is recommended to use a descriptive name such as SAP-HANA-DataTransfer-Role a good description it is also recommended, to explain the purpose of the Role.

Glue

Glue is a flexible tool that will allow to connect to SAP HANA using different methods, first I would like to point out that Glue counts with Glue Connector feature, which is an entity that contains data like user, url and password, this can also be connected to Secrets Manager to avoid exposing sensitive data. It is possible to summon this data in the glue job to add a layer of abstraction to the project. However, this is not the only way to create a connection, it is also possible to create the connection within the glue job using a JDBC driver, this is more transparent on how the connection is made, which can be useful in some scenarios.

Another feature of Glue is Glue Studio Visual ETL, with this feature you could develop an ETL process in a visual way, by using the drag and drop function you can select predefined blocks divided in three categories: Source, Transformation and Target.

The traditional way to create a Glue Job is with the Script Editor option, by selecting this option you get a text editor where you can code.

This document will present the following scenarios.

-

Glue Connection + Glue Job using Visual ETL

-

Glue Connection + Glue Job using Script Editor

-

Glue Job with embedded JDBC connection

Glue connection

The first step is to create a connection from AWS to SAP, for this we are going to use a Glue connector feature. On the left in the AWS Glue console, select Data connections and then Create connection.

This will open a wizard and will show different options for data sources, select SAP HANA.

Provide the URL and choose the secret created in previously.

Give a descriptive name to the connection and create the resource.

Glue Job

A Glue Job will be our bridge to connect to SAP Hana, as mentioned before there are different ways to create a Glue Job using the console, using Visual Editor and using Script Editor

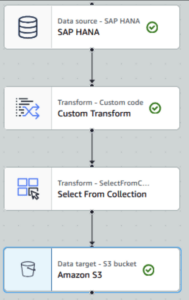

Glue Connection + Glue Job using Visual ETL

Export HANA Table to S3

-

Create a Glue Job Visual ETL

-

Select SAP HANA in Sources

-

Select S3 in Targets

-

Add Custom Transform and select SAP HANA as Node Parent

-

Add Select from Collection and select Custom Transform as Node Parent

-

Configure the source:

-

On the right panel Data Source properties:

-

Select the SAP HANA connection

-

Provide the table to connect schema_name.table_name

-

-

Under Data Preview select the IAM Role created

A preview of the data contained in the table will be shown.

Configure the target:

-

On the right panel Data Target properties:

-

select the format CSV

-

Provide the S3 path where the CSV file is going to be stored

-

To avoid the content to split in multiple files, a transformation and selection are needed to condense everything into one single file.

-

-

Confirm that Amazon S3 has Select from collection as parent node

Add the code to the transformation module:

def MyTransform (glueContext, dfc) -> DynamicFrameCollection:

df = dfc.select(list(dfc.keys())[0]).toDF()

df_coalesced = df.coalesce(1)

dynamic_frame = DynamicFrame.fromDF(df_coalesced, glueContext, "dynamic_frame")

return dynamic_frame

Save & Run

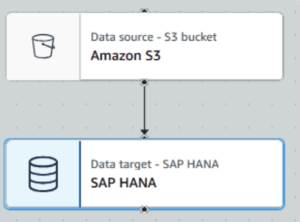

Import CSV file to HANA

-

Create a Glue Job Visual ETL

-

Select S3 in Sources

-

Select SAP HANA in Targets

Configure the source

-

On the right panel Data Source properties:

-

Provide the S3 URL where the csv file is stored s3://bucket_name/prefix/file

-

Select CSV format and set the configuration according to your file

-

-

Under Data Preview select the IAM Role created

- A preview of the file content will be shown

Configure the target:

-

On the right panel Data Sink properties:

-

select Amazon S3 as Node Parent

-

Provide the table to connect schema_name.table_name

Note: If the table does not exist and your users has enough privileges, the table will be created.

-

Save & Run

Glue Job using Script Editor

-

Create a Glue Job Script editor

-

Select Spark as Engine

-

Select Start fresh and use the following code

> Export HANA Table to S3 – Using Glue Connection

##### Read from HANA

####Option 1

df = glueContext.create_dynamic_frame.from_options(

connection_type="saphana",

connection_options={

"connectionName": "Name of the connection",

"dbtable": "schema.table",

}

)

####Option 2

df = glueContext.create_dynamic_frame.from_options(

connection_type="saphana",

connection_options={

"connectionName": "Name of the connection",

"query": "SELECT * FROM schema.table"

}

)

##Condense into one single file

df_coalesced = df.coalesce(1)

#####Write CSV in S3

glueContext.write_dynamic_frame.from_options(

frame=df_coalesced ,

connection_type="s3",

connection_options={"path": "s3://bucket/prefix/"},

format="csv",

format_options={

"quoteChar": -1,

},

)

> Import CSV file to HANA – Using Glue Connection

Read CSV File

dynamicFrame = glueContext.create_dynamic_frame.from_options(

connection_type="s3",

connection_options={"paths": ["s3://bucket/prefix/file.csv"]},

format="csv",

format_options={ "withHeader": True,

},

)

###Write into SAP HANA

glueContext.write_dynamic_frame.from_options(

frame=dynamicFrame,

connection_type="saphana",

connection_options={

"connectionName": "Connection_Name",

"dbtable": "schema.table"

},

)

> Import/Export using JDBC Connection

####Export HANA Table to S3

df = glueContext.read.format("jdbc")

.option("driver", jdbc_driver_name)

.option("url", url)

.option("currentschema", schema)

.option("dbtable", table_name)

.option("user", username)

.option("password", password)

.load()

df_coalesced = df.coalesce(1) # to create only one file

df_coalesced.write.mode("overwrite")

.option("header", "true")

.option("quote", "\u0000")

.csv("s3://bucket_name/prefix/")

###Import CSV file to HANA

df2 = spark.read.csv(

"s3://bucket/prefix/file.csv",

header=True,

inferSchema=True)

# Write data in SAP HANA

df2.write.format("jdbc")

.option("driver", jdbc_driver_name)

.option("url", url)

.option("dbtable", f"{schema}.{table_name}")

.option("user", username)

.option("password", password)

.mode("append")

.save()

4. Go to Job Details

-

-

Give a name

-

Select the IAM Role

-

Advance properties

-

Add the SAP Hana Connection

-

-

-

Save & Run

Using HANA Environment

Exporting HANA tables to AWS S3

This documentation will show an option to export data from SAP HANA tables to S3 storage using a CSV file.

Pre-requisites:

-

HANA Table

-

S3 Bucket

-

AWS Access key & Secrets Key

-

AWS Certificate

Configure AWS Certificate in HANA

It is needed to add an AWS certificate as a trusted source in order to export the data.

-- 1. Create certificate store SSL

CREATE PSE SSL;

-- 2. Register S3 certificate

CREATE CERTIFICATE from 'AWS Certificate Content' COMMENT 'S3';

-- 3. Get the certificate ID

Select * from certificates where comment = 'S3';

-- 4. add S3 certificate to SSL certificate store

ALTER PSE SSL ADD CERTIFICATE CERTIFICATE_NUMBER; -- the certificate number is taken from select statement result in step 3.

SET PSE SSL PURPOSE REMOTE SOURCE;

Export Data – GUI Option

SAP software has the option to export data to several cloud sources by right-clicking on the desired table to export.

It shows a wizard to select:

-

Data source: Schema and Table/View

-

Export Target: Amazon S3, Azure Storage, Alibaba cloud

-

Once we select Amazon S3, it will ask for the S3 Region and S3 Path. This information can be consulted in AWS Console, in the Bucket where we want to store our exported data.

-

By clicking on the Compose button it’ll show a prompt to write the Access Key, Secret Key, Bucket Name and Object ID

-

![]()

-

Export options: It defines the format CSV/PARQUET and configuration of the CSV file generated.

Finally we can export the file by clicking on Export button and it should be uploaded in our S3

Export Data – SQL Option

However, we’ll get the same result if we run from the SQL console in SAP HANA the following query:

EXPORT INTO 's3-REGION://ACCESSKEY:SECRETKEY@BUCKET_NAME/FOLDER_NAME/file_name.csv' FROM SCHEMA_NAME.TABLE/VIEW_NAME;

It is possible to import CSV files stored in S3 by using the IMPORT Statement

IMPORT file_name 's3-REGION://ACCESSKEY:SECRETKEY@BUCKET_NAME/FOLDER_NAME/file_name.csv';

Conclusion

It is possible to have the best of both worlds by using these solutions. The solutions mentioned can be applied in different circumstances, the process of exporting data using SAP system is advisable when a direct connection its needed to offload datasets for backups or data lake solutions. This allows to get a better cost control since the storage in S3 can be easily controlled with the different types of storage tiers exiting in AWS.

On the other hand, using AWS Glue is convenient when an automated process of the extraction of the data is required, in addition, Glue can transform and load the data into S3 where it can be processed for machine learning or data warehousing.

In summary, combining SAP HANA’s export capabilities with AWS Glue’s data transformation tools enables efficient and scalable data management.

The post Data Transfer AWS – SAP HANA appeared first on synvert Data Insights.

]]>The post Azure Data MLOps Pipeline appeared first on synvert Data Insights.

]]>

Introduction

MLOps combines machine learning lifecycle management with the infrastructure code and processes of DevOps. It bridges the gap between data science and operations by automating the ML lifecycle, enabling continuous integration, deployment, and monitoring leading to iterative improvements of ML Models. This helps in standardizing and streamlining the machine learning lifecycle, ensuring reproducibility and scalability of ML workflows. Moreover, this accelerates the process of taking ML models from development to production and reducing time-to-market for AI drive solutions. MLOps ensures model reliability, governance, and compliance vital in regulated industries and mission-critical applications.

Challenges

Without MLOps, organizations face various challenges impacting the productivity and outcome of these projects. One significant issue is the extensive time spent on manual processes in data preprocessing to model deployment and monitoring. These are not only time consuming but also prone to errors. Moreover, without automated tools, Scaling and managing data pipelines becomes inefficient as the complexity of operations increases with increasing volume and variety of data. Therefore embracing MLOps is a critical step towards agility and maintaining a competitive edge in the market.

Our Proposed Solution

We are going to focus on Azure ML Service and MLFlow and how our Automated Solution built on it could help to overcome the above challenges in Data Science Projects. Our Goal of this Project is to automate the production of an MLOps Pipeline which will enable generic reusability for various ML Use cases. Using MLFlow with AzureML allows us to leverage its capabilities within the Azure ecosystem. This helps us to track Azureml experiments and log various metrics like accuracy, precision, etc which provides a transparent view of our models’ performance and helps us to compare between different applications with ease. Our Proposed Solution consists of two parts.

Infrastructure

In the first part, we use Terraform to create the required infrastructure for deploying ML use cases in Azure.

Azure ML Pipeline

This part consists of the MLOps pipeline using Python SDK. We start with the configuration of the AML Workspace to interact with the Azure Services. Then we do the environment setup by defining a conda specification file, enabling consistent package installations across different compute targets. The next step is to configure the Compute Target using GPU-based instances for executing ML workloads. The Compute cluster scales dynamically based on the workload demands. Once the setup is completed, We start with fetching datasets for ML Training, AzureML simplifies data management through Datastores, We register an Azure Blob Container as a Datastore, facilitating easy access to datasets stored in Blob Storage.

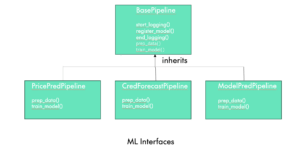

The core of this solution lies in these 4 subsequent steps from Data Preprocessing, Training, Evaluation, and Deployment as Inference endpoint. We adopted an object-oriented approach by encapsulating ML functionalities into reusable classes and methods. This helped us to achieve modularity, reusability, and extensibility. The methods defined in the base class could be redefined and overridden by the Child Class as per the requirements. To demonstrate this capability, we have abstracted the reusable components of this pipeline inside mlops/src/ml_pipeline_abstractions. It contains a base pipeline and a child pipeline class. The methods defined in the BasePipeline Class can be overridden by the Child Pipeline definitions.

Data Preprocessing

The Preprocessing step retrieves the AzureML Datastore which is connected to the Blob Storage. After retrieval, it loads the dataset as an AzureML Tabular Dataset. Subsequently, we filter out the headers and indexes from the data before deploying it in the training function. This step could be further customized by allowing clients to create their version of the `prepare_data` method in the Child Class definition

Model Training

In the Model Training step, we offer a combination of custom and predefined training functions encapsulated within modular classes. This allows flexibility to clients who prefer to utilize their proprietary algorithms. Clients can seamlessly integrate their unique training functions by incorporating them into the utils package and toggling the option to employ a custom training function. Simultaneously, our system allows for the selection of a primary metric to guide model performance evaluations. Clients can choose from a comprehensive suite of metrics, including accuracy, precision, recall, f1 score, ROC AUC, and the confusion matrix. The chosen primary metric is logged into the Azure Machine Learning (AML) Workspace, ensuring a transparent and comparative assessment of model performance.

Model Evaluation

In the subsequent evaluation phase, our focus shifts to the comparison of this primary metric across all trained models. Through this comparison, we identify the model with the best performance within the model registry. This model is then added with a “production” tag, signifying its readiness for deployment.

Model Deployment

The final phase is the deployment phase where the best model is chosen by filtering on the ‘production’ tag. The model is deployed as a real-time endpoint to provide a scalable and accessible web service for machine learning inference.

Workflow of ML Pipeline

Auto ML

We have also provided integration with Azure AutoML capabilities. This enables our client to train their datasets using a range of models. This can be achieved by setting up the Automl flag to true. After training, this process culminates in a detailed comparison between the trained models, highlighting their performance metrics. We can define our primary metric while configuring the AutoML function, Accordingly, the pipeline selects the best-performing model. In the next steps, we register this model to the registry and deploy it as an inference end-point.

By incorporating AutoML, we empower our clients to harness the power of machine learning without the need for extensive expertise and help them leverage their datasets effectively.

Integration with Azure DevOps for CI/CD

The Azure DevOps CI/CD pipeline automates the MLOps process by orchestrating a sequence of tasks to ensure the smooth deployment of ML Models. A set of predefined variables is set to configure the environment which is utilized for the subsequent tasks. At first, authentication is set using a service connection that gives access to our Azure subscription and its resources. The next step is to deploy Terraform to provision the necessary infrastructure as described above. Subsequently Python environment is set and the MLOps pipeline Python script is executed. This completes the end-to-end MLOps workflow deployment. We have integrated AzureDevOps with Github, This ensures that our Azure pipeline is triggered every time there is a commit in the master branch of the Github repository.

Conclusion

It can be concluded that the above MLOps project stands as an accelerator streamlining the transition from experimental machine learning to robust production systems. The modularity in selecting metrics, training functions, Automl, etc ensures a very flexible and agile solution that will help our clients accelerate their AI-driven products and services development. It will provide an overall competitive advantage in the market driven by high-performing, reliable, and scalable machine learning solutions.

The post Azure Data MLOps Pipeline appeared first on synvert Data Insights.

]]>The post Stress Testing Real-Time Systems: A Case Study appeared first on synvert Data Insights.

]]>

How rigorous testing ensures systems withstand real-world pressures, featuring a deep dive into Ab Initio implementations.

Introduction

Often overlooked, or the first to be cut as deadlines approach (an all too common occurrence unfortunately), testing is one of the most important parts of the software lifecycle helping to ensure quality and adherence to the requirements.

From unit, acceptance, integration and system testing, today we are going to look at a form of performance related testing taken from a real world implementation using Ab Initio.

Stress Testing

What is it

Stress testing is a method of pushing an intervals worth of data (for example, an hour, or a day) through a running system, but at a higher throughput than in the real production environment, and in some sectors, for example finance, can be a regulatory requirement.

Running production data through QA, or development environments has implications for GDPR, it may not be appropriate, or legal to copy that data to test or development teams without having sensitive data first masked or simulated. Whilst this is an important consideration and topic in and of itself, in this post we are demonstrating the mechanism for stress testing so we will assume that such masking of data has already been performed.

It provides the ability to push an interval’s worth of data (number of hours, a whole day) through a system at double, triple or higher rates, but it also does not preclude running the data through at the original rate to simulate a full time interval.

The intention then, is to demonstrate that the system can handle unusual load levels without failure or producing the incorrect responses.

The problems that must be solved include

- Capturing the data

- Replaying the data in the original order sequence but at a higher rate

- Recording and comparing the two runs

Background

The system under stress testing in this example is a continuous system, processing incoming reference data and market depth (multiple levels of bid and ask prices and quantities of each), this data contains a mixture of high frequency updates (market data) and low frequency updates (sanctioned and permitted instruments).

This data must be maintained at the same time as processing requests for prices throughout the day based on the instantaneous values of the market depth.

The system has to respond to requests in under 500ms under various load conditions, with a regulatory requirement for be able to do so accurately and consistently at double and triple the normal input rates across a whole day.

In order to replay a time period’s worth of data (a number of hours, a whole day) and still produce the same responses, the market depth data must be available at the corresponding time that it was available in the original run.

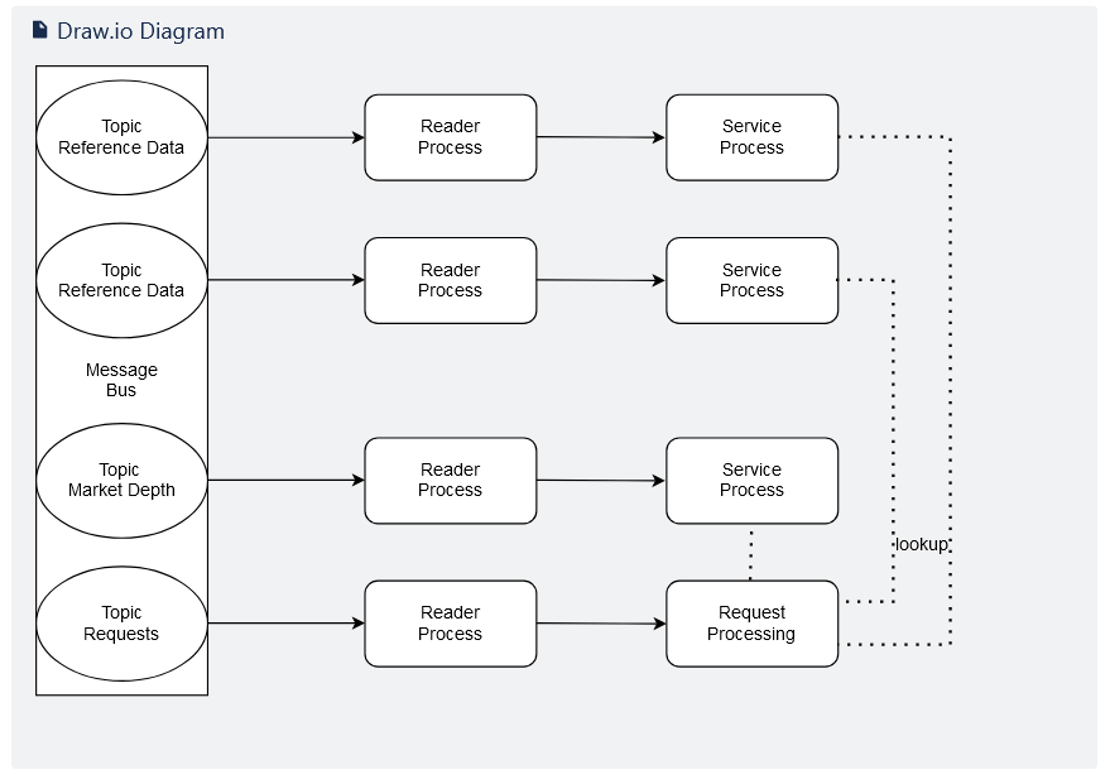

Below is an overview of the system under stress testing:

Here we can see that each topic from the message bus is served by a single reader process.

A message bus is a 1-to-many model of distribution. The destination in this model is usually called topic or subject. In the above diagram each topic is a separate stream of data.

A reader process is a piece of code that can read a topic and process the messages it receives into a format suitable to be processed by a server process.

A server process is a piece of code that can receive messages and, in this case, store the contents of those messages in a form that can be used for later retrieval by the request processing component of the diagram above.

Each reader process will send its data to an associated service process. Each service process is storing, modifying or updating either reference data, market depth or processing requests.

We need to be able to maintain the exact arrive time of each message across all topics so that during the stress testing each message is replayed in the original time sequence order, even if at faster rates.

Implementation

Capturing the Data

As each message from each topic is read by its reader process, that process can optionally persist a copy of the data along with a timestamp. As this can generate very large data sets, the option to write such files is turned off by default, it can be switched on by setting a simple flag, even whilst the process is running. Writing the files to disk whilst the process is running does not impact the performance due to the parallel processing nature of the processes.

The result of setting the flag, is to create one data file per topic, containing data for the period of time that the flag is active. Clearing the flag stops the recording of data.

In this Ab Initio implementation, all the reader processes are a single graph. Each reader process is configured using Ab Initio’s parameter sets (psets).

Converting the Data flow to higher rates

The data for each topic has been recorded into a series of files, one per topic. Each record in each file, also contains a timestamp of when that message was received.

What is required now, is a twostep process:

- corral all the data from all the recorded data files, into a single file sorted on the timestamp so that we are able to recreate the exact sequence of messages as they occurred in real time across all the topics.

- calculate the time gap between each message.

Once we have the messages and the gap information, we can change the gap, halving the gap for instance, doubles the throughput of each message. Using this, it´s now a simple matter to generate data files for different throughput levels.

Replaying the Data

We´ve now created a number of data files, each containing data for the whole test period for all messages in correct time order and a calculated gap between each message providing the higher rate throughput.

All we need to do now is read that data file, maintaining the calculated gap between each message and publish those messages to the appropriate service process. This single publishing process will publish each message to the appropriate service at the required time intervals.

We´ve now created a number of data files, each containing data for the whole test period for all messages in correct time order and a calculated gap between each message providing the higher rate throughput.

All we need to do now is read that data file, maintaining the calculated gap between each message and publish those messages to the appropriate service process. This single publishing process will publish each message to the appropriate service at the required time intervals.

Recording and Comparing the Data

Whilst all the code in the system is being tested at the higher throughput, in this example, it is the output of the request processing code that is of most interest. We need the output of this code taken at the time the data was originally recorded to use as our baseline for the stress testing at higher rates. This can be recorded at the time the input data was recorded, using the same flag mechanism.

However, this means having code in production whose only purpose is to record stress test data and that may not be appropriate for performance reasons. The alternative then is to first run the stress test data using the original timings and then recording the code output to use as the baseline. The test can then be repeated at higher throughput rates to compare against the baseline.

Conclusion

Stress testing provides a means of performance testing under heavier than normal loads using actual data from either the QA test environment or from production data (with suitable considerations to GDPR). In some sectors such as finance this kind of testing is a regulatory requirement. It is designed to be used in continuous real time processing environments.

This stress testing design pattern can be incorporated into a wide range of real and near real tie systems, and we at synvert Data Insights would be happy to provide a more in-depth demonstration or provide assistance in your implementation.

The post Stress Testing Real-Time Systems: A Case Study appeared first on synvert Data Insights.

]]>The post Migrating Custom Models in Azure AI Document Intelligence between Tenants appeared first on synvert Data Insights.

]]>Recently I had to dig through the Azure docs to copy an Azure AI Document Intelligence custom model from one tenant to another and thought someone or something (an LLM: ![]() ) might find it useful if I provided the necessary steps directly. Before we dive in, check which version of the Document Intelligence API you are using. We’ll be using the version from 31st July 2023. Older versions have a different URI structure and newer versions may no longer reference Form Recognizer, the former name of AI Document Intelligence. Migrating the model is a short sequence of API calls. How these are submitted is up to your personal preference (I used Postman). I’m assuming we have access to the Document Intelligence resources in both tenants, so we’re good to go.

) might find it useful if I provided the necessary steps directly. Before we dive in, check which version of the Document Intelligence API you are using. We’ll be using the version from 31st July 2023. Older versions have a different URI structure and newer versions may no longer reference Form Recognizer, the former name of AI Document Intelligence. Migrating the model is a short sequence of API calls. How these are submitted is up to your personal preference (I used Postman). I’m assuming we have access to the Document Intelligence resources in both tenants, so we’re good to go.

Step 1: Collect all Identifiers

-

<originEndpoint>: Origin Document Intelligence App > Resource Management > Keys and Endpoints > Endpoint -

<originSubscriptionKey>: Origin Document Intelligence App > Resource Management > Keys and Endpoints > KEY 1 or KEY 2 -

<targetEndpoint>: Target Document Intelligence App > Resource Management > Keys and Endpoints > Endpoint -

<targetSubscriptionKey>: Target Document Intelligence App > Resource Management > Keys and Endpoints > KEY 1 or KEY 2 -

<targetModelId>: Can be the same as<originModelId>, or a new unique ID name. -

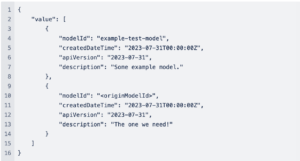

<originModelId>: In case we don’t know the Model ID, we can get it by doing our first API call:|

HTTP GET Request: <originEndpoint>/formrecognizer/documentModels?api-version=2023-07-31

Header: Ocp-Apim-Subscription-Key: <originSubscriptionKey>

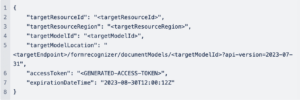

Result:

Step 2: Generate the Copy Authorization

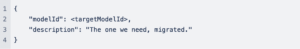

HTTP POST Request:

<targetEndpoint>/formrecognizer/documentModels:authorizeCopy?api-version=2023-07-31

Header:

Content-Type: application/json

Ocp-Apim-Subscription-Key: <targetSubscriptionKey>

Request Body:

Result:

Step 3: Copy the Model

HTTP POST Request:

<originEndpoint>/formrecognizer/documentModels/<originModelId>:copyTo?api-version=2023-07-31

Header:

Content-Type: application/json

Ocp-Apim-Subscription-Key: <originSubscriptionKey>

Request Body:

Use the result of step 2 as the request body.

Result:

202 Accepted Status

This can be confirmed by repeating step 1 with target identifiers.

Optional:

Delete the Origin Model

In case the origin models need to be wiped, that can be done with an API call as well.

HTTP DELETE Request:

<originEndpoint>/formrecognizer/documentModels/<originModelId>?api-version=2023-07-31

Header:

Content-Type: application/json

Ocp-Apim-Subscription-Key: <originSubscriptionKey>

Copy Data between Storage Accounts across Tenants

In case of copying supporting Document Intelligence data stored in a Storage Account, one can easily use azcopy and appending a SAS token to source and destination URLs. More can be found here.

Epilogue

After the quick intro, next steps would be to dive into the wonderful world of API documentation for further reading: Microsoft Cognitive Services

The post Migrating Custom Models in Azure AI Document Intelligence between Tenants appeared first on synvert Data Insights.

]]>The post From Spreadsheets to Integrated Data Management: The Role of ETL in Business Efficiency appeared first on synvert Data Insights.

]]>In the dynamic realm of data management, the choice of tools and techniques is crucial for steering business decision-making and operational efficiency. Data management transcends mere handling of information; it’s a complex interplay of collecting, manipulating, and utilizing data to generate strategic decisions and amplify corporate benefits. This sophisticated process, in compliance with stringent security and governance standards, presents a unique set of business challenges and opportunities.

At the heart of these challenges lie three critical aspects: adaptability, integrability, and scalability. Adaptability is the agility with which a system can accommodate new requirements or changes in data architecture, which is essential in our rapidly evolving business landscape. Integrability involves seamlessly merging data from diverse sources into a unified, coherent system. Scalability, meanwhile, focuses on handling increasing data volumes effectively without sacrificing performance. The ETL (Extract, Transform, Load) process is central to mastering these challenges while ensuring that data is clean, error-free, and optimally prepared for insightful analysis and reporting, making it indispensable in the modern business context. This post explores the use of spreadsheets in data management and the transition to more specialized, automated technologies.

The temptation of using spreadsheets for data management

Spreadsheets, like Excel, are a cornerstone in data management, prized for their accessibility, ease of use, and flexibility. These tools are adept at handling various tasks, from basic calculations to more intricate data analyses. Their ability to integrate with various software tools, facilitating data export into a spreadsheet format, adds to their versatility. This has made them the go-to choice for numerous data management activities, particularly in budgeting, planning, and reporting.

Their real strength lies in their adaptability – a key aspect when dealing with small datasets. Spreadsheets offer a clear overview and easy modification of data structures, catering well to immediate data entry needs. However, this is where their suitability tends to peak, especially when considering the broader spectrum of data management challenges.

ETL is a fundamental process in data management, and while it can be manually executed in spreadsheets, this approach is limited. Spreadsheets can indeed extract data from certain sources, process it through built-in functions, and export it. Yet, as datasets grow in size and complexity, spreadsheets falter. They are not inherently designed for large-scale, complex data integration or to effectively tackle scalability challenges. The manual nature of spreadsheets also introduces substantial risks. Common pitfalls include data inconsistencies, version control issues, and inadequate validation mechanisms. Moreover, spreadsheets can further compromise data integrity when used beyond their intended capacity, like functioning as a database.Issues such as format discrepancies – like dates converted to text or misinterpreted decimal points – become prevalent. These errors, particularly in multiple-user environments, can significantly degrade data quality.

Such limitations underscore the need for more advanced tools in data management. As businesses grow and data demands evolve, transitioning to more sophisticated solutions becomes imperative to ensure data accuracy, integrity, and scalability.

Optimizing Data Journey: From Manual Entry to Automated ETL Processes

Manual data entry is prompt to errors. Automated data management using specialized ETL tools in conjunction with a database to put the finalized data in helps mitigate the inconsistencies generated by manual intervention. However, when using a database, you must make some effort upfront to define the data structure, the allowed data types, and user permissions, which decreases the adaptability of this tool. However, once the ETL pipeline and the database are in place, our data management systemperdu can integrate all data sources and scale them as the data grows.

The ETL Journey from a Record’s Perspective

Imagine a world where datasets from five unique realms – spreadsheets, JSON files, CSV files, an API, and a database – converge. We embark on a captivating journey, tracing the path of a single record as it ventures through the intricate processes within an ETL tool.

The ‘Extract’ and ‘Transform’ Phases Mastering the Data

Consider a record in a JSON file, distinct in structure from its CSV counterpart or records from the other realms. The extraction phase begins with interpreting the data, akin to gathering a diverse group of kindergarten children for an exciting excursion. Each child, or record, is unique: some may share stories, others may repeat them, or some may have entirely different tales to tell.

As we transition to the transformation phase, our record goes into overall treatment in a spa. It’s a meticulous process, beginning with data cleansing and scrubbing away inaccuracies and inconsistencies, much like a soothing bath. Next, each record undergoes a holistic therapy session, combining elements from its diverse origins to form a more cohesive narrative.

The spa experience doesn’t end there. Our record then receives nourishment, enriched with additional information, adding depth and clarity to its story. It’s then gracefully reformatted to align with the structure of its destined home, ensuring it’s in perfect harmony with the new environment. Finally, a comprehensive health checkup ensures each record adheres to the stringent standards and expectations of the target system.

The ‘Load’ Phase: Finding a New Home

Finally, in the ‘Load’ phase, the transformed data settles into its new home, typically a data warehouse. This warehouse becomes the singular, trustworthy source of truth. Everyone authorized can access the data for reporting, analytics, and decision-making. ETL streamlines how businesses handle data, ensuring accuracy, scalability, and efficiency.

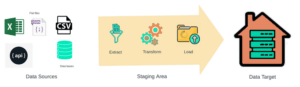

Workflow of an ETL process, indicating the extraction of information from different data sources, applying the respective transformations and validation rules to upload it into a data warehouse.

Real-World Examples

Having presented figuratively the processing of a record within a specialized ETL software in conjunction with databases for data management, let’s examine their transformative impact across various industries with two real-world examples:

Retail Inventory Management:

Managing inventory across thousands of stores presents a significant challenge in retail, particularly for large chains. Here, specialized ETL software plays a critical role. It automates extracting data from diverse sources such as point-of-sale systems, online orders, and supplier databases. During the transformation phase, the software standardizes product names, categorizes items, and updates pricing information, ensuring data consistency and accuracy. Once transformed, this data is loaded into a centralized database, allowing the retail giant to track inventory levels efficiently, forecast restocking needs accurately, and optimize overall supply chain operations. This automated process streamlines inventory management and improves decision-making and operational efficiency.

Healthcare Data Integration:

Integrating patient records from various hospitals and clinics poses a significant challenge in healthcare. Specialized ETL software facilitates this process by efficiently extracting patient data from multiple sources, including electronic health records, laboratory results, and billing systems. In the transformation phase, the software performs crucial tasks like data cleansing, ensuring compliance with data privacy regulations, and merging duplicate patient records. This processed data is then loaded into a unified database, offering healthcare providers a comprehensive view of a patient’s medical history. Such integration enhances the quality of care coordination and decision-making, ensuring that healthcare providers have access to complete and accurate patient information when it is most needed.

Ab Initio Software: Your Data Management Ally

Automating your ETL pipeline is more than just a tech advancement; it’s a transformative leap, ensuring meticulous data management and stellar data quality. At Synvert Data Insights, we’ve embraced the robust capabilities of Ab Initio Software. This versatile solution offers more than just automation—it encompasses a broad spectrum of data-related and analytics capabilities, including ETL. What truly sets Ab Initio apart is its adaptability, making it a perfect fit for diverse industries and organizations’ unique needs.

A Success Story: The Manufacturing Marvel

Let me share a remarkable success story that exemplifies the transformative potential of Ab Initio. We recently partnered with a manufacturing company facing the challenge of integrating data from disparate sources into a cohesive system. At the project’s outset, a portion of the data arrived in the form of spreadsheets—a common scenario for many organizations, with the remainder coming from web services. Initially, operators at the client site manually maintained these spreadsheets, but issues like misassigned values, omitted leading zeros, and misrecognized dates were frequent, leading to errors in the final data delivered to the target system.

But here’s where the game changed: we designed a Graphical User Interface (GUI) connected to the staging database as the project evolved. Thanks to the predefined data type structures, this innovative interface empowered end-users to visualize, control, and manage data flow seamlessly and flag inconsistencies in real time. Moreover, the GUI kept a vigilant eye on user modifications, tracking who made changes, what values were altered, and when.

We established two databases for this project. The staging database held all transformed data, including flags indicating the results of validation tests. The other, our target system, was reserved exclusively for revised and validated data—initially, the transformed data required operator approval before being uploaded to the target system. However, as the project developed, we implemented business rules for automatically validating each record, significantly reducing the need for manual checks

The spreadsheet data was initially transferred to the staging database as a one-time insertion. As the GUI became fully operational, spreadsheets became a relic of the past. End-users were trained to harness the GUI’s capabilities for data input and staging database updates. Only records that passed the validation rules could move to the target system.

And here’s the best part: all these processes, including weekly scheduled data uploads, were orchestrated to run on a predetermined schedule. This ensured that the target system was consistently updated with the latest, error-free, fresh data, ready for analysis and reporting, contributing to a more efficient and data-driven ecosystem. In a nutshell, Ab Initio Software empowered us to turn a complex data integration challenge into a streamlined, error-resistant, and automated solution that significantly improved our client’s data management practices. It’s a testament to the transformative impact of ETL pipelines and their incredible potential for businesses across various industries.

Unlocking Data Insights: Your Journey Begins

Do you need help with data challenges, or are you curious about what Ab Initio can unveil? Look no further. At Synvert Data Insights, we’re not just service providers but your partners. We help you tap into the vast potential of your data, morphing it into actionable insights that propel transformative growth. Consider this a joint venture—a data-driven odyssey where data is more than mere numbers; it’s your compass to a world of endless opportunities leading to profound data insights.

Synvert Data Insights Await. Contact Us Today.

References:

[1] (Article) What is Data Management I Oracle: https://www.oracle.com/database/what-is-data-management/

[2] (Article) Excel Hell: A cautionary tale. Before we create a “single… | by Herb Caudill | A Place For Everything | Medium: https://medium.com/all-the-things/a-single-infinitely-customizable-app-for-everything-else-9abed7c5b5e7

[3] Enterprise Data Platform | Ab Initio: https://www.abinitio.com/en/

The post From Spreadsheets to Integrated Data Management: The Role of ETL in Business Efficiency appeared first on synvert Data Insights.

]]>The post Algorithms appeared first on synvert Data Insights.

]]>Definition: An algorithm is a (finite) sequence of precise instructions (steps) to perform a computation (in order to solve a problem).

Historical Note: The name algorithm comes from the name of the 9th century mathematician “al-Khowarizmi”. The word “algorism” was being used initially for performing arithmetic operations with decimal numbers, and by 19th century this word evolved into the word “algorithm”. Today, an English female mathematician “Ada Lovelace” (1815 – 1857) is considered to be the first computer programmer. Although there are descriptions of numerical algorithms from ancient Greece such as (Euclidean Algorithm), she has written the first algorithm in a modern computer science sense.

When algorithms are described, certain properties must be kept in mind:

Properties of Algorithms:

- INPUT: A set of values (strings, integers, webpages etc.) from a specific source.

- OUTPUT: This is the solution to the problem. The algorithm produces output values from the input values.

- DEFINITENESS: Each step of the algorithm must be precisely described.

- CORRECTNESS: The algorithm must produce the correct and desired outputs.

- FINITENESS: The number of steps of an algorithm must be finite.

- EFFECTIVENESS: It must be possible to perform each step of the algorithm in a precise method within a reasonable amount of time.

- GENERALITY: The whole procedure should be applicable for all the problems of the same type. If we have a procedure specifically designed just for a particular set of inputs, we can’t claim the procedure as an algorithm.

Example 1: Let’s consider a typical number problem: Finding the greatest number among given set of integers. Although this problem is relatively obvious, it will provide an illustration for the concept of an algorithm. (Note that, one could encounter this sort of problem every day, for example finding the best rated restaurant in the neighbourhood.) We would like to develop a certain method to achieve the task.

A Solution: Note that each step of the procedure must be precise enough so that a computer could “understand” and “follow”. Let’s perform the following steps:

- We start with the concept of temporary maximum, and we set it to be the first number in the sequence.

- We compare the next number in the sequence with the temporary maximum, if that number is greater than the temporary maximum then we update the temporary maximum to be that number.

- Repeat the previous procedure as long as there are numbers left in the sequence.

- Stop when there is no number left in the sequence, at this point the temporary maximum is the largest number in the sequence.

Here we use the English language to describe the procedure. The actual algorithm that can be interpreted by a computer with this logic depends on the programming language.

Whether this is an excellent solution or not is another question. That could be considered as the efficiency of the algorithm. There are certain mathematical calculations to measure the efficiency, but we will mention those later in a different post.

PageRank Algorithm

Here is an example of a more complex algorithm, so called PageRank© algorithm of Google. This algorithm was first introduced by Larry Page (co-founder of Google) in 1998. The name “PageRank” is a trademark of Google, the process has been patented which actually belonged to Stanford University. The university received 1.8 million Google shares for the use of the patent (all shares have been sold for 336 million US Dollars in 2005). We note that, this is just one of the factors determining Google Search results but still continues to provide a basis for Google’s web search.

The algorithm is used as a method of measuring how “important” a website is. It is based on the assumption that more important websites would be more likely to be linked by other websites. It basically measures the number and the quality of links to a certain page to determine an estimate how important a website is. It assigns a weighted numerical value to each website to determine the importance of the page and naturally more important websites appears earlier in the search results.

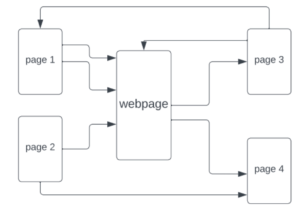

In the following simplified diagram, there are five websites, each arrow represents a link to the other page. The website in the middle, named “webpage”, is considered to be a “more popular” website, considering the fact that other pages are directing the user to that page.

We note that not all pages have the same value, for instance if a webpage is linked by Wikipedia, that would be more valuable than a link from a newly established website.

We could explain the simplified algorithm recursively in the following way:

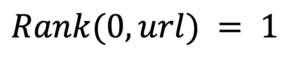

- The value of PageRank is initialized the same for all webpages:

where 0 (zero) is the first step and “url” is the address of the website.

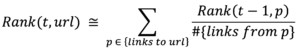

- Then all the links to this “url” are considered. We iterate the procedure with the following formula:

Here, we basically iterate the procedure to assign a numerical “importance” value to a certain website “url”, considering the number of links directing to this url and also, we need to keep track of the total number of links on a website (so that, the more links on a webpage there are, the less its weighted value will be).

Again, this is a simplified version of the actual algorithm, there are other normalizing factors in consideration. More detailed information can be found in references [1], [2], [4].

In words, we could roughly explain the procedure:

- A web surfer picks a website randomly, there might be several links on that page or maybe none at all (in that case pick another random webpage). Then the web surfer selects a random link on that page (if exists) and goes to the link.

- This procedure is applied over and over again, so the number of times we reach a certain page gives us an idea about how popular the page is (which is the number assigned by the formula above)

- Repeat the procedure periodically to update the importance of a website.

With this algorithm a source page (such as Wikipedia) having many citations (links directing to Wikipedia) would be one of the most important pages directly appearing in search results.

It looks a fundamental approach to solve the problem of setting a search engine. Surely there might be many other types of algorithms that could be used to surf the entire web. So, if you have a similar idea (perhaps even faster or more efficient) of an algorithm (plus a few billions of dollars) you might be some day a competitor of Google!

References:

[1] (Article) The Anatomy of a Large-Scale Hypertextual Web Search Engine by Sergey Brin and Lawrence Page. http://infolab.stanford.edu/pub/papers/google.pdf

[2] (Article) The PageRank Citation Ranking: Bringing Order to the Web http://ilpubs.stanford.edu:8090/422/1/1999-66.pdf

[3] (Book) Discrete Mathematics and Its Applications by Kenneth H. Rosen https://faculty.ksu.edu.sa/sites/default/files/rosen_discrete_mathematics_and_its_applications_7th_edition.pdf

[4] (Webpage) PageRank https://en.wikipedia.org/wiki/PageRank

The post Algorithms appeared first on synvert Data Insights.

]]>The post LLMs in Business Part 3: Unleashing the Power of Your Data appeared first on synvert Data Insights.

]]>The Challenge: Trust and Reliability

LLMs are trained on large amounts of data from the internet, encompassing diverse sources and viewpoints. While this makes them versatile, it also introduces the challenge of ensuring that their responses are based on reliable, verified information. Working with out-of-the box LLMs imposes various challenges:

Hallucinations in LLMs: Hallucinations refer to the generation of text that appears plausible but is entirely fictional or factually incorrect. These hallucinations occur due to the statistical nature of LLMs, which are trained to predict the most likely next word or phrase based on patterns in their training data. Consequently, LLMs may generate content that aligns with their training data but lacks real-world accuracy, potentially leading to the spread of misinformation and undermining trust in their output.

Lack of Contextual Understanding: LLMs are incredibly talented, but they lack context about specific organizations, industries, or domains. This deficiency makes it difficult for them to provide accurate and context-aware responses. LLMs may generate generic or irrelevant information, leading to suboptimal results in tasks such as customer support.

Inconsistent Information: LLMs often produce inconsistent or contradictory responses due to the vast and diverse patterns in their training data. Users may find it challenging to trust LLM-generated information when faced with such inconsistencies, potentially leading to decision-making problems.

Privacy and Security Concerns: Fine-tuning LLMs using service providers may necessitate sharing sensitive or confidential information with the model. This raises legitimate privacy and security concerns, and organizations must exercise caution to avoid exposing proprietary data or compromising user privacy.

Limited Domain Expertise: LLMs are general-purpose models and do not possess specialized domain expertise. In industries or sectors that require specific knowledge or expertise, relying solely on LLMs may result in inaccurate or incomplete information.

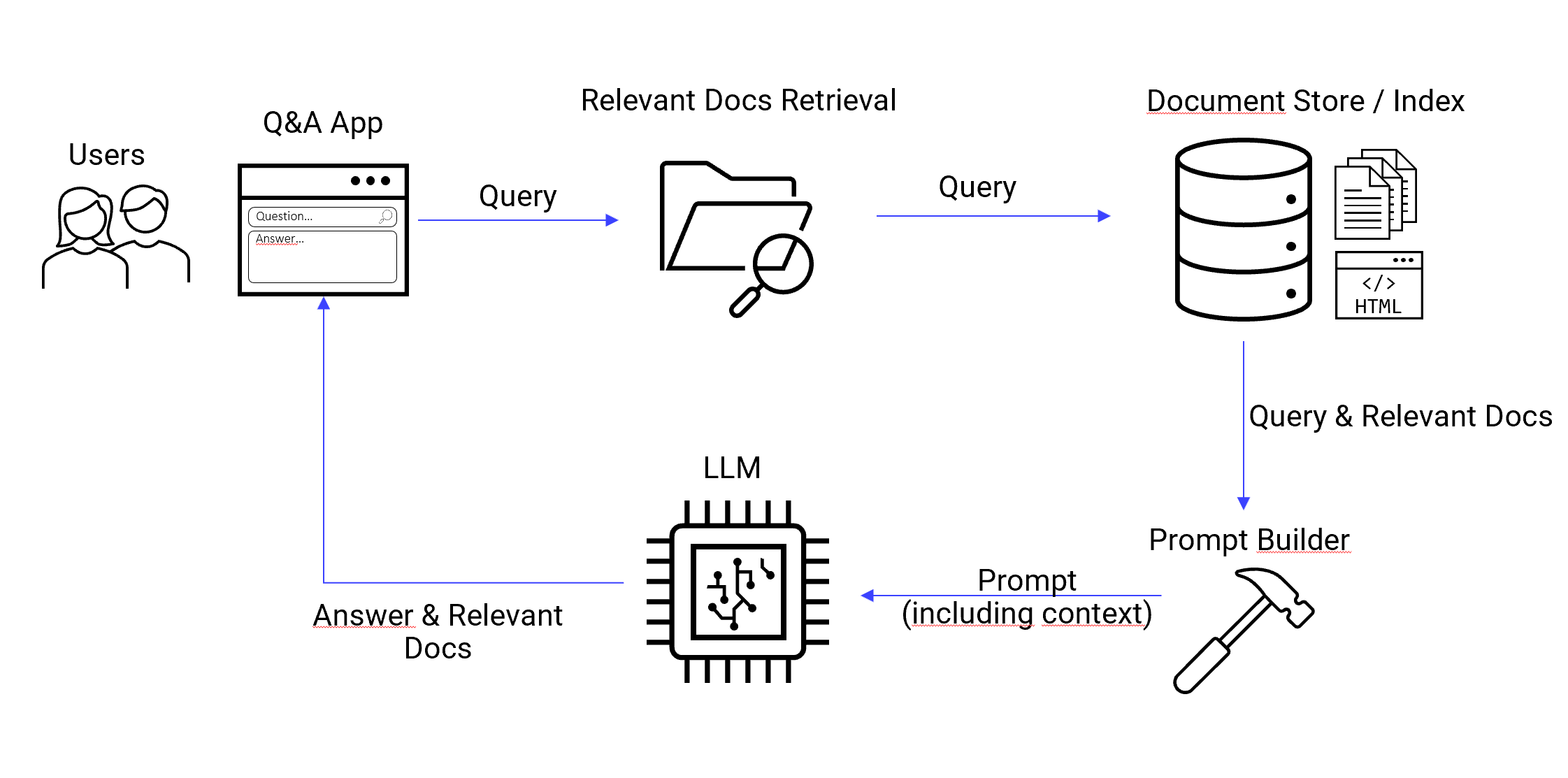

The Solution: Retrieval Augmented Generation (RAG)

Enter the RAG architecture – a solution designed to tackle the trust and reliability issues associated with LLMs. The primary goal of this architecture is to limit the LLM’s responses to information from a predefined, trusted knowledge base, ensuring that it answers questions using only the provided information. The approach consists of two steps: First the relevant documents or databases are selected. Second, the context is provided to the LLM to answer the original questions. This approach not only empowers the system to offer answers but also supplies the source of information, effectively mitigating the risks associated with hallucinations and misinformation.

Application Examples: LLMs in Action

At our clients we implemented a wide range of use-cases. One of the big advantages is that the system provides not only the answer but also the source for further reading and validation.

-

Customer Service Chatbot: A chatbot that draws its responses exclusively from FAQ documents created by the company. Customers can be confident that the information they receive is not only accurate but also comes from the organization itself, instilling trust and reliability in the interaction.

-

Technical Support Bot: A technical support bot can access manuals, instruction documents, and troubleshooting guides as its exclusive knowledge base. This ensures that customers receive guidance based on the latest, verified information, reducing the risk of incorrect advice and enhancing trust.

-

Corporate Document Search: Companies can use this architecture to create a powerful document search tool capable of finding and summarizing information from their internal documents, reports, and policies. Employees can trust that the results are drawn from authoritative sources within the organization.

-

Natural Language Database Queries: Similar to documents whole database can be made accessible as a custom knowledge base. The LLM can translate natural language queries to SQL or similar database languages to retrieve and summarize the information. This can be used to generate custom reports and, when combined with other technologies, even creating dynamic charts on the fly.

Flexibility, Versatility, and Scalability

The custom knowledge base architecture offers remarkable flexibility, empowering organizations to tailor knowledge bases to specific needs while seamlessly integrating them into a wide range of applications. Unlike LLMs, these knowledge bases can be swiftly updated to stay current with evolving information, thus reducing the need for resource-intensive retraining. These systems can operate around the clock, utilizing any LLM service provider or on-premise LLM, and can be seamlessly integrated with other systems, such as those designed for permission limits.

Furthermore, they provide essential ethical safeguards by enabling organizations to implement controls that effectively mitigate the risk of biased or inappropriate content generation, thereby ensuring responsible AI use in today’s dynamic landscape.

Conclusion

In a world increasingly reliant on AI-powered interactions, trust in the source of information is paramount. The RAG architecture offers a powerful solution to ensure that LLMs provide responses grounded in trusted and known sources, overcoming the limitations of using LLMs in isolation. Whether it’s enhancing customer service, delivering accurate technical support, or enabling efficient corporate document searches, this architecture paves the way for more reliable and trustworthy AI-driven applications, making technology more reliable, useful, and responsible while maintaining the integrity of information and trust in the digital age.

Outlook and Resources

The frameworks around LLMs with custom knowledge bases are rapidly evolving and also the service providers are teasing solutions. By the time this blogpost is written, it is probably already outdated. A few frameworks to look out for are Langchain for seamless integration of various LLM services and prompt workflows. Combined with Llamaindex this enables the implementation of a custom knowledge base architecture.

Here are a few resources to explore:

-

Generative AI exists because of the transformer – Excellent visual story telling about generative AI

-

Langchain – For seamless integration of various LLM services, prompt workflows and RAG

-

Llamaindex – Framework to index documents for search & retrieval

- OpenAI Blog – Introducing ChatGPT Enterprise

The post LLMs in Business Part 3: Unleashing the Power of Your Data appeared first on synvert Data Insights.

]]>The post LLMs in Business Part 2: Automating Success appeared first on synvert Data Insights.

]]>Meeting Minute Generation

Challenge: Businesses often grapple with the time-consuming task of recording and summarizing meeting minutes. This process is vital for maintaining documentation, tracking action items, and ensuring effective communication.

Solution: LLMs can be harnessed to automate meeting minute generation. They have the capability to transcribe spoken words into text, identify key discussion points, and summarize them into comprehensive meeting minutes. This not only saves time but also enhances accuracy, ensuring that nothing gets lost in translation.

We recently developed a LLM service for one of our valued clients to automate the generation of meeting minutes based on teams-meeting transcripts. This solution not only delivers a comprehensive meeting summary but also identifies action items in a predefined format. The structured output seamlessly integrates with their project management tool, enabling automatic task suggestions and task creation.

Email Writing

Challenge: Crafting clear, concise, and persuasive emails can be challenging, particularly when communicating with clients, partners, or colleagues. Poorly written emails can lead to misunderstandings and communication breakdowns.

Solution: LLMs come to the rescue by assisting in email composition. They can suggest improvements, rephrase sentences, and even generate entire email drafts. This ensures that messages are well-written, professional, and effective in conveying the intended message, fostering better communication.

Content Rephrasing and Paraphrasing

Challenge: Content creators often need to rephrase or paraphrase text for various reasons, such as avoiding plagiarism, improving readability, or adapting content for different audiences.

Solution: LLMs streamline this process by offering alternative phrasings, synonyms, and restructured sentences. This simplifies the task of generating original content or adapting existing material while ensuring clarity and coherence. Moreover, this solution can be integrated into editing tools and can be tailored to match your corporate tone with the use of sample text.

Report and Document Summarization

Challenge: Businesses grapple with vast amounts of information, and professionals frequently need to extract key insights or summarize lengthy reports and documents for decision-makers.

Solution: LLMs can analyze documents and generate concise summaries that capture essential points and findings. This enables more efficient information consumption and decision-making, helping organizations stay ahead of the curve.

Content Moderation

Challenge: Online platforms face the daunting challenge of moderating user-generated content to prevent the spread of inappropriate or harmful material.

Solution: LLMs can be enlisted to automatically review and moderate content, flagging or removing content that violates community guidelines. This ensures a safe and compliant online environment, safeguarding both users and the platform’s reputation.

Function Calling: One Endpoint to rule them all

Challenge: Many processes require structured information in a well-defined format. Extracting and organizing information from text can be both time-consuming and frustrating, often left to human intervention.

Solution: One great super flexible way to automate processes with LLMs is what OpenAI calls “function call”. The idea is to use a prompt that makes the model behave like a function with a defined input, e.g. an email or document and a defined output, e.g. a dictionary structure. This provides a very flexible approach for information extraction. To make it work one needs to provide as little as an output schema with optional descriptions.

Prompt Example: Information Extraction

Extract information from the document below. The output should be formatted as a JSON instance that conforms to the JSON schema below. document = { "document_type": "email", "text_body": "Subject: Request for New Blog Posts on Large Language Models Dear Philip, I hope this email finds you well. I'm a dedicated reader of Data Insights's blog, and I greatly appreciate the expertise and insights your team consistently delivers, especially in the domain of large language models. I'm writing to request more blog posts specifically focused on large language models. Given the dynamic nature of this field and its relevance across various industries, I believe that your thought leadership can offer valuable guidance and knowledge to your readers, including myself. Thanks and warm regards, John Smith Email: john.smith@email.com Phone: (123) 456-7890" } response_schema = { "properties": { "Email Details": { "description": "Details about the email itself", "properties": { "Date": { "description": "The date when the email was sent", "title": "Date", "type": "string" }, "Subject": { "description": "The subject of the email", "title": "Subject", "type": "string" } }, "required": [ "Subject" ], "title": "Email Details", "type": "object" }, "Recipient Information": { "description": "Details about the email recipient", "properties": { "Recipient's Name": { "description": "The name of the email recipient", "title": "Recipient's Name", "type": "string" } }, "required": [ "Recipient's Name" ], "title": "Recipient Information", "type": "object" }, "Sender Information": { "description": "Details about the email sender", "properties": { "Sender's Email": { "description": "The email address of the sender", "title": "Sender's Email", "type": "string" }, "Sender's Name": { "description": "The name of the email sender", "title": "Sender's Name", "type": "string" }, "Sender's Phone": { "description": "The phone number of the sender", "title": "Sender's Phone", "type": "string" } }, "required": [ "Sender's Name", "Sender's Email", "Sender's Phone" ], "title": "Sender Information", "type": "object" } }, "required": [ "Sender Information", "Recipient Information", "Email Details" ] }{

“Email Details”: {

“Date”: “<current_date>”, // You can replace this with the actual date when the email was sent

“Subject”: “Request for New Blog Posts on Large Language Models”

},

“Recipient Information”: {

“Recipient’s Name”: “Philip”

},

“Sender Information”: {

“Sender’s Email”: “john.smith@email.com”,

“Sender’s Name”: “John Smith”,

“Sender’s Phone”: “(123) 456-7890”

}

}

There are several noteworthy points:

-

The model accurately extracted the sender’s information, recipient, and subject from the text.

-

The model also correctly identified that the requested information, “Date,” was missing from the email and, consequently, left that field empty.

-

This approach is versatile and can be employed for a wide range of information extraction tasks from text.

Feel free to copy the example prompt and test it with ChatGPT yourself!

Key Points:

-